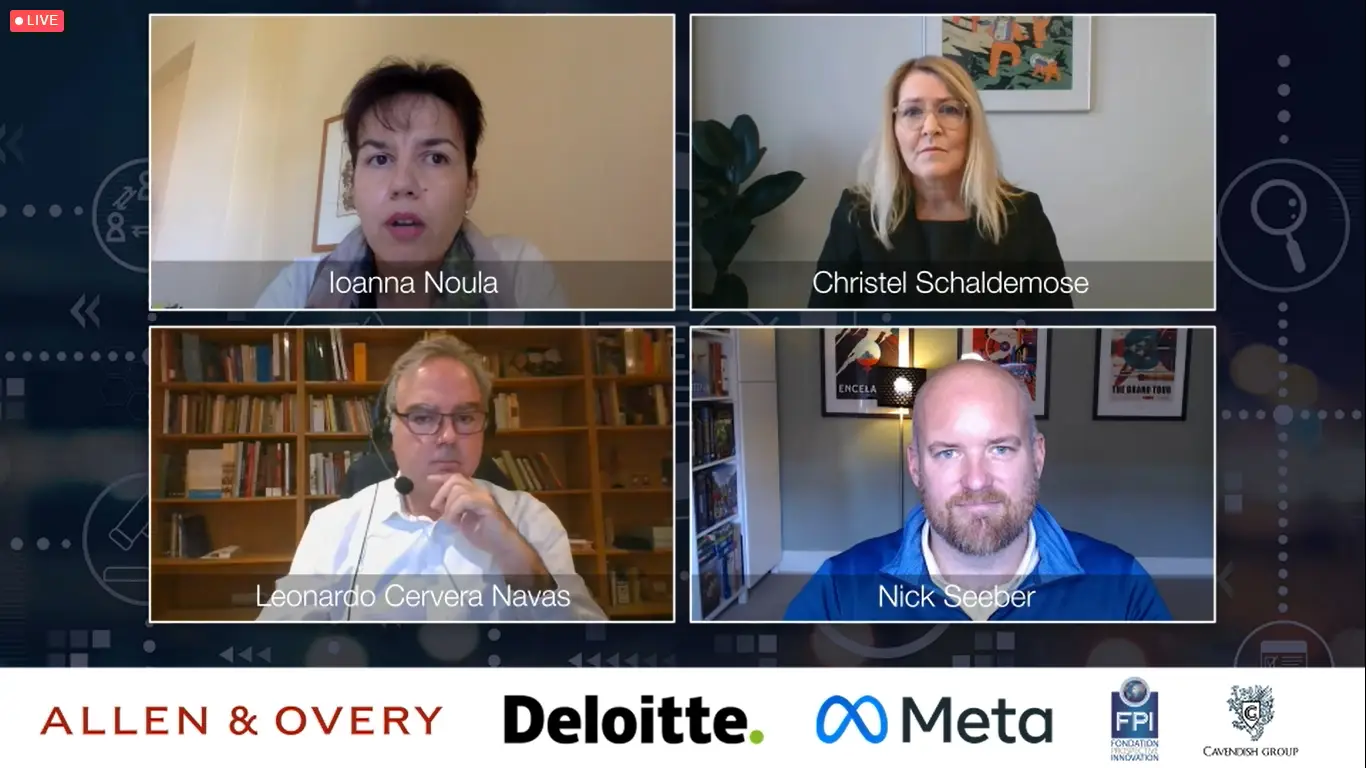

Who should take responsibility for online content: states, companies or consumers? How should we prepare for the metaverse and Web3? At the RAID conference in October 2022, Deloitte Partner Nick Seeber, Allen & Overy Partner Maeve Hanna, Christel Schaldemose MEP and EDPS Director Leonardo Cervera Navas tackled the big questions from Ioanna Noula of the Internet Commission.

Ioanna Noula, Senior Leader – Operations, Internet Commission: Nick, how is the internet changing and what’s your view on how the user experience will change over the next five years?

Nick Seeber, Partner & Internet Regulation Lead, Deloitte: Deloitte does a lot of research on digital consumer trends. Recently, we published a global study with about 40,000 consumer respondents which showed that there is a broad lack of trust in the digital space, and that consumers and business users are confused – and they’re looking for governments and platform companies to take a lead in creating trust.

So, in terms of what we can do about it, there are three things which I think we should think about. The first is about making it easier for ordinary users to resolve trust disputes quickly and at a low cost. This is an element of the Digital Services Act (DSA) specifically for content disputes, but I can envisage a big improvement to the trust in platforms if users feel that they can access an arbitration of trust at a reasonable cost.

The second is about the professionalisation of the trust function. Effectively every company which has an internet business model is going to have an equivalent of a chief trust and safety officer or similar. This is a really important function in any internet business, and that will continue to increase in prominence. I would expect that the career path for individuals who are participating in that trust and safety function will be even more important. I think increasing the level of professionalism in that function is a good thing for both users and platform companies, and the trust between them, and the policymakers and regulators.

And then the third thing is in terms of how we can increase the level of democracy in trust policy development. Something I’m very excited about is the ability to use digital platforms to allow ordinary users to participate in policy development, and increasing the level of the ‘wisdom of the crowd’ – the sandboxing of policy development with user input. For me that would increase legitimacy; it would allow users to feel they’ve had a part in shaping the policies which platforms use; it would also show that transparency to the regulators, which is an important facet of lots of the new regulations; and then finally it would expose the complexity, the fault lines and the areas of consensus which are emerging from that process. So I feel that that would be a very exciting development, that digital democratisation of trust with users, business users and platforms participating in a sandbox type environment.

Those are the three things which I think would be a really big improvement.

Ioanna Noula: Maeve, what will be the impact of the soon-to-be implemented legislation across the globe regarding content moderation? I’d also like to bring to attention and pay tribute to Molly Russell and the inquiry into her suicide here in the UK, which concluded that there is a direct link between fatal harms and digital companies’ algorithmic choices. In your view, who is responsible and should be held liable?

Maeve Hanna, Partner, Allen & Overy: That’s a really important and really big question. There are divergent views on it. What we’re seeing at the moment is that the discussion really has moved on from ‘should we be regulating or moderating content?’ to how we should do that. So there is a development and a consensus that there needs to be some form of regulation over content online.

In the context of looking at a changing internet, that does lead to real challenges for how you develop a regulatory framework around developing technology. We’re often talking too late in the day about technology that has been developed and implemented.

Quite often what we’ve seen, where there has been a lacuna between the technology that has been developed and the laws that apply, is a real discussion around ethical frameworks. We saw that with data ethics, AI ethics and we’re now seeing a discussion around metaverse ethics as well. That is important, but not sufficient, to put in place the protections needed.

And back to your question on who can take responsibility for moderating online content: there is a role for individuals. I don’t think there are any doubts around the importance of regulating illegal content and making individuals responsible for the illegal content they upload. But where we are at the moment is a discussion on putting systems and controls in place to keep users safe online, rather than just focusing on individual content. We need to look beyond users to the platforms and online companies, as well as to government.

The online providers are an obvious group to have a role here, either individually or through collective action. Technology companies are best placed to understand the technology. They see the lifecycle of content, how it is uploaded, used, disseminated and shared, and so they have to be part of this discussion – but there is a difference in views and approaches.

We’ve seen that recently in the context of the inquest in the UK. We’ve got different approaches to a common issue. If it’s only an individual company-driven approach to regulation, that could lead to a lack of clarity for users, for wider industry and obviously for the companies themselves.

So that then leaves us with states, individually or collectively, taking action. I think really that’s where the focus is at the moment – on regulation. That doesn’t mean it’s easy, but there needs to be an understanding from the politicians and policymakers of how the technology works, in order to devise a system of regulation that works for both the users and the technology companies.

Ioanna Noula: Christel, what are the pain points for policymakers in the process of developing fair and effective measures? And as the internet is changing, will policy continue to play catch-up with the impact of new tech?

Christel Schaldemose MEP: In the EU we have learned that we might have been too slow to regulate platforms when it comes to content. It’s difficult because on the one hand when things are new we would like to give room for innovation and we don’t know what will work and how they will develop, but on the other hand, once we start seeing some tendencies and issues and problems, we need to regulate. I’m sure that with the DSA and the DMA now adopted, we will have basic legislation in place that changes the landscape.

With the DSA, I do believe that we found the right balance between regulating and requiring the platforms to take responsibility for what happens online, but at the same time not compromising freedom of speech for instance, and not destroying the idea behind many of the platforms.

With the DSA, we want the platforms to become more responsible. If they become aware of illegal content it needs to be removed, but we are also moving a little bit into harmful content. That is of course the tricky part, because some harmful things can be legal even though they are harmful.

What we say is that we would like to look at the overall impact of the services, so if they constitute a systemic risk, for instance, if their algorithms are amplifying disinformation or content that could cause harm related to public health, public security or democracy, then they have to adjust their systems. We’re not saying what they have to do; we’re saying that they need to do something. They need to mitigate risk.

I think this is the way to do it: we need to keep the arm’s-length principle in place, while at the same time obliging them to do something. Because the biggest change in the internet is not the services themselves, but that we’re spending more and more time online, and therefore we really need to make sure that what is illegal offline should also be illegal online. In the same manner that we’re regulating the offline world, we need to regulate the online world. But we need to do it in a sensitive, cautious way; we need to strike the right balance.

And I think that is what we did with the DSA and the DMA. Will it be enough to catch up and what about new developments? Well, that’s really not easy to tell. We don’t know what will happen to the metaverse, for instance; will we be able to regulate that in a good manner? We already have the tools in place if the metaverse becomes a reality. We will have to be faster in adjusting; we cannot be as fast as the internet, but we need to be faster than we have been so far.

Ioanna Noula: Mr Cervera Navas, I would like you to take us to the metaverse and ask you whether we’re metaverse ready, and what does this mean for the safety of children and vulnerable people.

Leonardo Cervera Navas, European Data Protection Supervisor (EDPS): So, you are addressing with me the risks of Web3 and the metaverse, but it’s important to say that from the EDPS, we acknowledge that these new technologies bring along huge potential and opportunities – good contributions for the future.

I consider that there are four self-evident risks that corporations and governments should contemplate.

The first risk is profiling. These new technologies are supposed to give data subjects more choice and more transparency. But if we consider the way that the successful business models are operating today in Web 2.0, we are more likely to see the opposite. Unless something is done, most likely we will continue to see ubiquitous behavioural tracking for advertising purposes, or paid data sharing by data brokers, anti-competitive business behaviours and proprietary closed standards, and a lot of processing of personal data.

If you think that the metaverse will be more tightly integrated in our daily life, imagine the impact if we continue with these practices in a more integrated metaverse. So we need to be ready to see more profiling, more complex connections of the various stakeholders. This will make it very difficult for citizens to understand what’s going on and how, and before whom, to exercise their rights.

This will also give rise to governance issues. What will happen when service providers from areas with different data protection standards, or even contradictory legal frameworks, connect in a seamless manner? This is a point where data protection authorities will need to cooperate more closely.

The second risk is security issues. One of the main problems is that, for these ecosystems to function properly, we have to allow small scale entities to participate in the ecosystems, and they may not have the resources to ensure that their products are sufficiently safe and protected from cyberthreats. So it is necessary as a matter of public policy to introduce resources and incentives, so these smaller scale entities can function equally securely. The recently published EU Cyber Resilience Act proposal would be a good step in that direction.

The third risk is identity management. Our avatars in the metaverse will unlock a completely new world and impact our activities in real life. Therefore it is essential to come up with a strong identity management mechanism. We need to ensure that we can recognise who is behind an avatar and prevent identity fraud across different metaverses. But on top of that, any identity management mechanism must take into account the need for anonymity in a specific context.

So far, biometrics have been proposed as a good solution for a strong continuous authentication, but we must remember that the use of biometric technology comes with important risks, because once your biometrics have been compromised the damage is forever, because you cannot change your face or the way you walk. So this has to be used with caution.

The fourth self-evident risk is deepfakes. If we are already starting to be a little bit scared about deepfakes, imagine what could happen if these technologies evolve. They could be a big disruption for the internet of the future. Something needs to be done to stop these deepfakes, from the public policy perspective.

In conclusion, it is clear that these self-evident risks constitute a threat to the sustainability in the medium and long term of the so-called metaverse. If the main actors, the big corporations, do not properly and urgently address these issues, there will be lots of business opportunities lost and we will move from a scenario of win-win to a scenario of lose-lose. They have to look at the big picture and they have to work together on standardisation, on privacy-by-design, otherwise they will be compromising their own possibility to do business in the future, because citizens, courts and data protection authorities will not tolerate the current situation, which is already bad, getting worse in the metaverse.

Follow the rest of the panel discussion here: https://www.raid.tech/raid-h2-2022-recordings